To optimise a website for Google, start by building an SEO strategy. The strategy has two parts: on-page SEO and off-page SEO. You can work on both at the same time, or do on-page first and off-page second. What you shouldn’t do is work only on off-page SEO, for a simple reason: it won’t deliver results if the technical SEO is not in order. Starting SEO work on a website therefore means starting with the basics, and that means on-page (technical) SEO.

There are many technical details to take care of, especially on a new website. In that case the job is essentially a tech SEO setup. You start with a clean slate and build each feature correctly, one by one, which leaves little room for inherited mistakes. You could even say this is the easy case.

On the other hand, working on a website that was previously optimised, but not well, is a tougher task. You must first find and pinpoint the existing errors and then find a suitable solution for each of them. And if you are not an expert or don’t know where to look, hunting for mistakes can lead to making new ones.

The hardest task of all is recovering from black hat techniques that were previously used to make the website rank higher, or from a penalty the site received for using such methods in the recent past.

So, the first thing to do is to find those mistakes and correct them. If you neglect them, your work will be wasted: the results won’t show no matter how hard you try to build backlinks or how original and engaging your content is.

Let’s take a look at the top 6 tech SEO problems and their solutions.


1. The Website Lacks HTTPS Security

Few things are more off-putting for a visitor than clicking a link to the website and, instead of landing on the homepage, seeing a grey or red screen with a “not secure” warning. Such a warning tends to chase visitors away immediately, as no one likes to leave their digital footprint in an unsecured place or, worse, risk getting infected by a virus.

That being said, the first thing to check is whether the website you are working on uses HTTPS. Type the domain name into Google Chrome: if the address bar shows a padlock or “secure” next to the address, you have nothing to worry about. If it does not, the warning will appear.

The Fix:

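Convert the site to HTTPS by acquiring an SSL certificate from a Certificate Authority.

Install the certificate on the server so that the site can be served securely over HTTPS, then redirect the old HTTP addresses to their HTTPS versions so that visitors and search engines land on the secure pages.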
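
Once the certificate is in place, the check described above can also be scripted. The following is a minimal sketch in Python, not a definitive test: the function names `final_url` and `serves_https` are made up for this illustration, and it only looks at where a request ends up after redirects, not at the certificate itself.

```python
from urllib.parse import urlparse
from urllib.request import urlopen


def final_url(url, timeout=10):
    """Request the URL, follow any redirects, and return the address finally served."""
    with urlopen(url, timeout=timeout) as response:
        return response.geturl()


def serves_https(start_url, resolve=final_url):
    """Return True if a request to start_url ends on an https:// address.

    `resolve` is a hypothetical hook (an assumption of this sketch) so the
    logic can be tried without a network connection.
    """
    return urlparse(resolve(start_url)).scheme == "https"


# Example call (needs network access; the domain is a placeholder):
# print(serves_https("http://example.com"))
```

If the function returns `False` for the plain `http://` address, the site is either not on HTTPS yet or is not redirecting visitors to the secure version.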

2. The Website Has No XML Sitemaps

XML sitemaps are the roadmaps that show Google which pages are important. They lead Google directly to those pages and help the Google bots understand them well. This makes sitemaps highly important for the website’s SEO rankings.

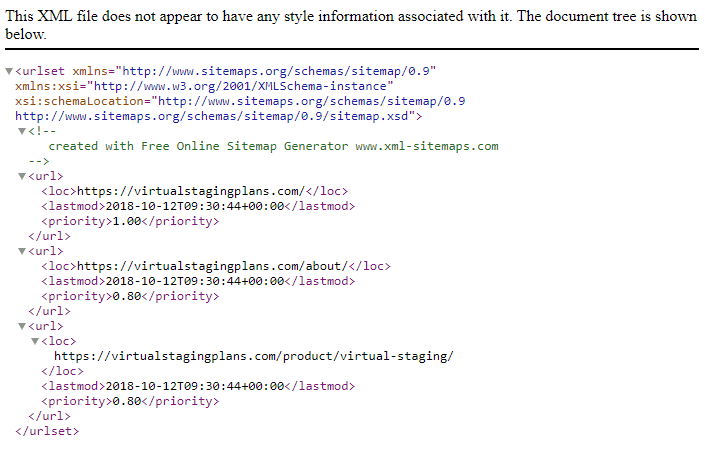

Check whether the website has an XML sitemap by typing the domain name into the browser’s address bar and adding “/sitemap.xml”.

If a sitemap exists, something like this will appear:

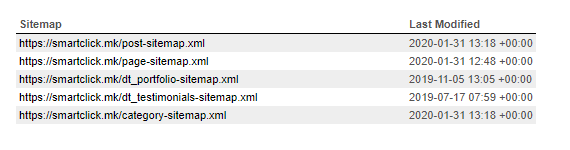


The Fix:

If there is no sitemap, a 404 page will open. In this case, your next step is to create one yourself or have a web developer create one for you. You can do it with an XML sitemap generator such as the Yoast SEO plugin on WordPress, or with any other online tool.
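
For a small site without a plugin, a sitemap can also be generated with a few lines of code. This is a minimal sketch in Python, assuming you already have a list of the page addresses you want Google to find. The function name `build_sitemap` and the example URLs are illustrative only; the namespace is the one defined by the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a sitemap document (as UTF-8 bytes) listing the given page URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


# Placeholder URLs; replace them with the real pages of the site.
pages = ["https://example.com/", "https://example.com/about"]
sitemap_bytes = build_sitemap(pages)

# To publish it, write the bytes to sitemap.xml in the site root:
# with open("sitemap.xml", "wb") as handle:
#     handle.write(sitemap_bytes)
```

The sketch only emits the required `loc` element for each page; optional fields from the protocol are left out to keep it short.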

3. The Website Is Very Slow

Another deal-breaker for visitors is a website that takes more than 3 seconds to load. If it does, they will probably go to a competitor’s website instead. That makes site speed extremely important, and it matters not only to visitors and potential customers but also to Google.

Check the site speed with Google PageSpeed Insights, which will detect specific problems on the site if any exist. Check both the desktop and the mobile performance. You can also use any other online tool that tests speed and gives additional insights. Make sure, though, that you understand what the insights mean.

The Fix:

The full solution to this problem can be very complex, as it depends on many factors that can be hard even for an expert, but here is an easy shortcut. Optimise and compress all the images on the website, enable browser caching, improve the server response time and minify the JavaScript. Finally, speak to the web developer so that you can rest assured that the best possible solution for the site is developed.
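
Before compressing images, it helps to know which ones are worth the effort. The sketch below, in Python, lists image files above a size threshold in a local copy of the site. The function name `oversized_images`, the list of file extensions and the 200 KB threshold are all assumptions chosen for illustration, not recommended values.

```python
from pathlib import Path

# File extensions treated as images in this sketch (an assumption; extend as needed).
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".gif", ".webp"}


def oversized_images(root, max_bytes=200_000):
    """Return (path, size_in_bytes) pairs for images larger than max_bytes, largest first."""
    results = []
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix.lower() in IMAGE_SUFFIXES:
            size = path.stat().st_size
            if size > max_bytes:
                results.append((path, size))
    return sorted(results, key=lambda item: item[1], reverse=True)


# Example call on a hypothetical uploads folder:
# for path, size in oversized_images("wp-content/uploads"):
#     print(f"{size / 1000:.0f} KB  {path}")
```

The files at the top of the list are the first candidates for compression or resizing.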

4. The Website Has Duplicate Content

Duplicate and unoriginal content will pollute the website rather than help it look sophisticated and rank. It confuses the search engine crawlers, and as a result, the correct content won’t be shown to the target audience.

Here is why duplicate content happens:

- Multiple versions of e-commerce shop items that show the same content under different URLs

- Printer-only versions of web pages that repeat the content of the main page

- The same content appearing in multiple language versions of an international website

The Fix:

Each of the problems above can be solved separately. In the first case, with the multiple versions, implement rel=canonical correctly so that the variants point to one preferred URL. In the second case, configure the printer-only pages properly, and in the last case, implement hreflang tags correctly.

Google also suggests other ways to handle duplicate content, including using 301 redirects, minimising boilerplate content and using top-level domains for country-specific content.
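
To make the rel=canonical and hreflang fixes more concrete, here is a minimal sketch in Python that prints the `<link>` tags which would go into a page’s `<head>`. The helper names `canonical_tag` and `hreflang_tags` and all URLs are hypothetical; on a real site these tags are usually produced by the CMS or an SEO plugin.

```python
from html import escape


def canonical_tag(preferred_url):
    """Build the tag that tells crawlers which URL is the preferred version of a page."""
    return f'<link rel="canonical" href="{escape(preferred_url, quote=True)}">'


def hreflang_tags(alternates):
    """Build one alternate tag per language version, from a {language: url} mapping."""
    return [
        f'<link rel="alternate" hreflang="{escape(lang, quote=True)}" '
        f'href="{escape(url, quote=True)}">'
        for lang, url in alternates.items()
    ]


# Every colour or size variant of a product page points to one preferred URL.
print(canonical_tag("https://example.com/shoes"))

# Each language version of the page lists all the language versions.
for tag in hreflang_tags({"en": "https://example.com/en/", "de": "https://example.com/de/"}):
    print(tag)
```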

5. The Website Has Images Without Alt Tags

Images without alt tags are missed SEO opportunities. The alt tag describes the picture in words, which helps with SEO rankings because it tells the bot what the image is about.

The Fix:

Missing alt tags and broken images are easily identified with SEO site audits. That is why you should audit the image content regularly and always stay on top of the current situation across the website.
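
For a quick check of a single page between audits, a small script can list the images that have no alt attribute at all. This is a minimal sketch using Python’s standard `html.parser`; the class name `MissingAltFinder` and the sample markup are made up for the example. It deliberately does not flag an empty `alt=""`, which is commonly used for purely decorative images.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Collect the src of every <img> tag that has no alt attribute."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            attributes = dict(attrs)
            if "alt" not in attributes:
                self.missing.append(attributes.get("src", "(no src)"))


# Sample markup standing in for a real page.
page = '<img src="a.png" alt="A cat"><img src="b.png"><img src="c.png" alt="">'
finder = MissingAltFinder()
finder.feed(page)
print(finder.missing)
```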

6. The Website Has Broken Links

Broken links on the website cheapen the visitors’ experience and make them leave without finding what they were looking for. Regular monitoring is therefore needed to catch links that break over time. You can do that by running regular site audits.

The Fix:

Use a site audit tool and fix the broken links as soon as they are reported, replacing each one with a working link. Make this a regular check, say every month or so.
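
If you already have a list of the links on a page, the check itself is simple to sketch. The Python example below treats a link as broken when the server answers with a status of 400 or higher, or when no answer arrives at all. The function names `http_status` and `broken_links` are illustrative, and the sketch assumes the servers answer HEAD requests, which is not always the case; a full audit tool handles many more situations.

```python
from urllib.error import HTTPError
from urllib.request import Request, urlopen


def http_status(url, timeout=10):
    """Return the HTTP status code for url, or None if no response was received."""
    request = Request(url, method="HEAD")
    try:
        with urlopen(request, timeout=timeout) as response:
            return response.status
    except HTTPError as error:
        # The server answered, but with an error code such as 404.
        return error.code
    except OSError:
        # DNS failure, refused connection, timeout and similar network errors.
        return None


def broken_links(urls, get_status=http_status):
    """Return (url, status) pairs for links that fail or answer with 4xx/5xx.

    `get_status` is a hypothetical hook (an assumption of this sketch) so the
    logic can be tried without network access.
    """
    broken = []
    for url in urls:
        status = get_status(url)
        if status is None or status >= 400:
            broken.append((url, status))
    return broken


# Example call (needs network access; the URLs are placeholders):
# print(broken_links(["https://example.com/", "https://example.com/missing-page"]))
```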